Editor's Desk · Issue 3: Make Latent Visible

Mechanism · Symbolica / Agentica

350 lines is not the trick: Agentica lets the LLM code, delegate, and return typed objects

The REPL keeps intermediate objects; call_agent(task, return_type, **objects) injects only the needed state into a sub-agent; private trials stay isolated and return as typed objects.

A magazine letter on the week of May 12, 2026: across AI agents, perpetual futures, robotic hands, self-driving labs, cat facial mimicry, and a Colorado wet-slab avalanche, surface signals are quietly decoupling from deep structure.

Cover note

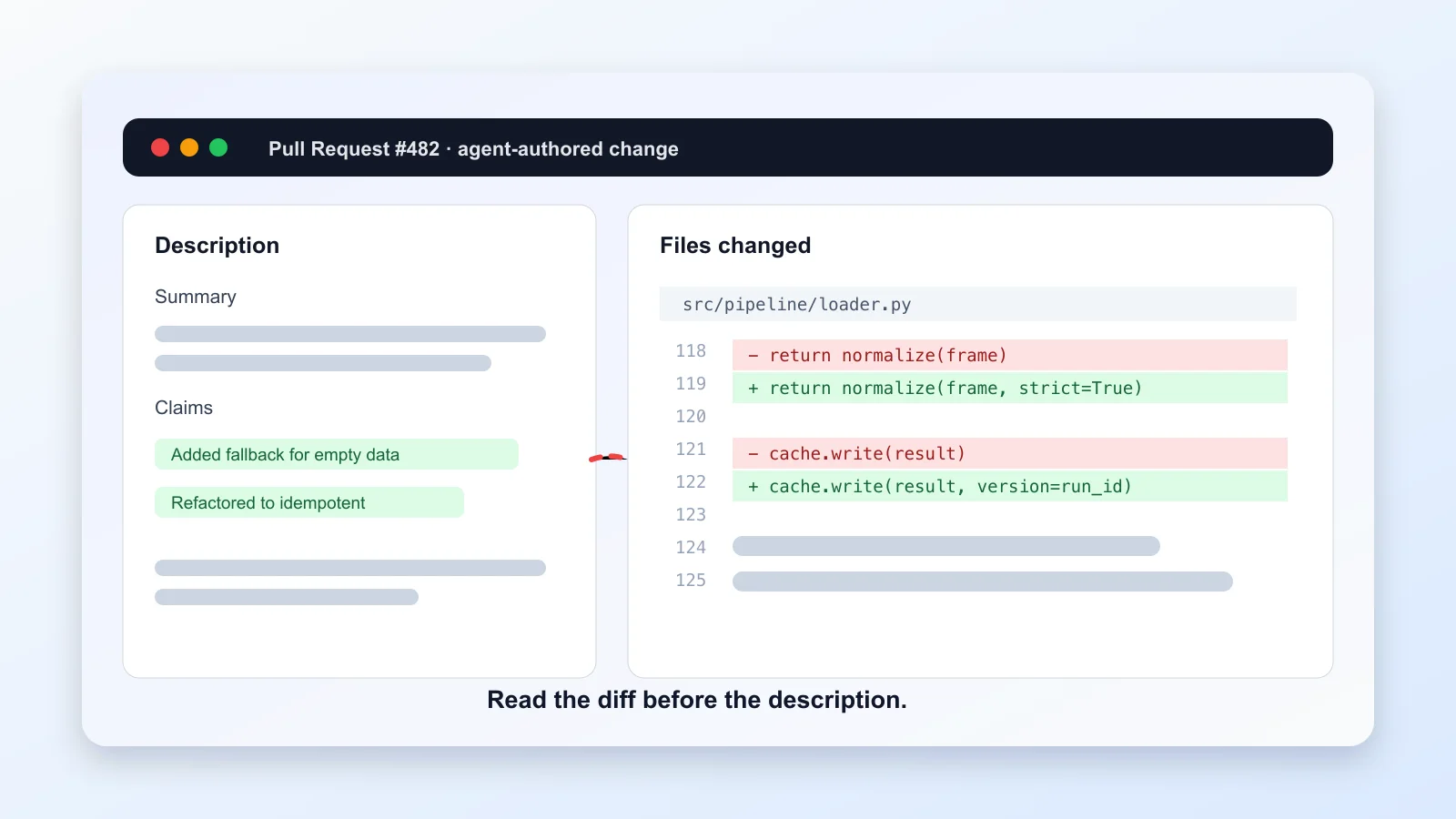

Last weekend I did something boring. I pulled up every pull request I’d had AI help me write in the past six months, laid them out in two columns — the description AI produced at the time, and the actual diff that eventually got merged — and went through them one by one.

The result made me laugh and also made me sweat. Two out of twelve: the descriptions clearly stated “added a fallback for empty DataFrames” and “refactored to be idempotent”, but no matter how I read the diff, I couldn’t find the changes that matched. They weren’t wrong code. They were code that looked more complete than it was, with a smooth narration laid on top that didn’t quite match what the code actually did. I’d clicked merge without noticing, because the descriptions read so well I assumed the code I was reading was the code being described.

A small story. But it’s where I noticed: the hard problem of this wave of AI engineering in 2026 isn’t that the model got dumber. It’s that the model got too smooth.

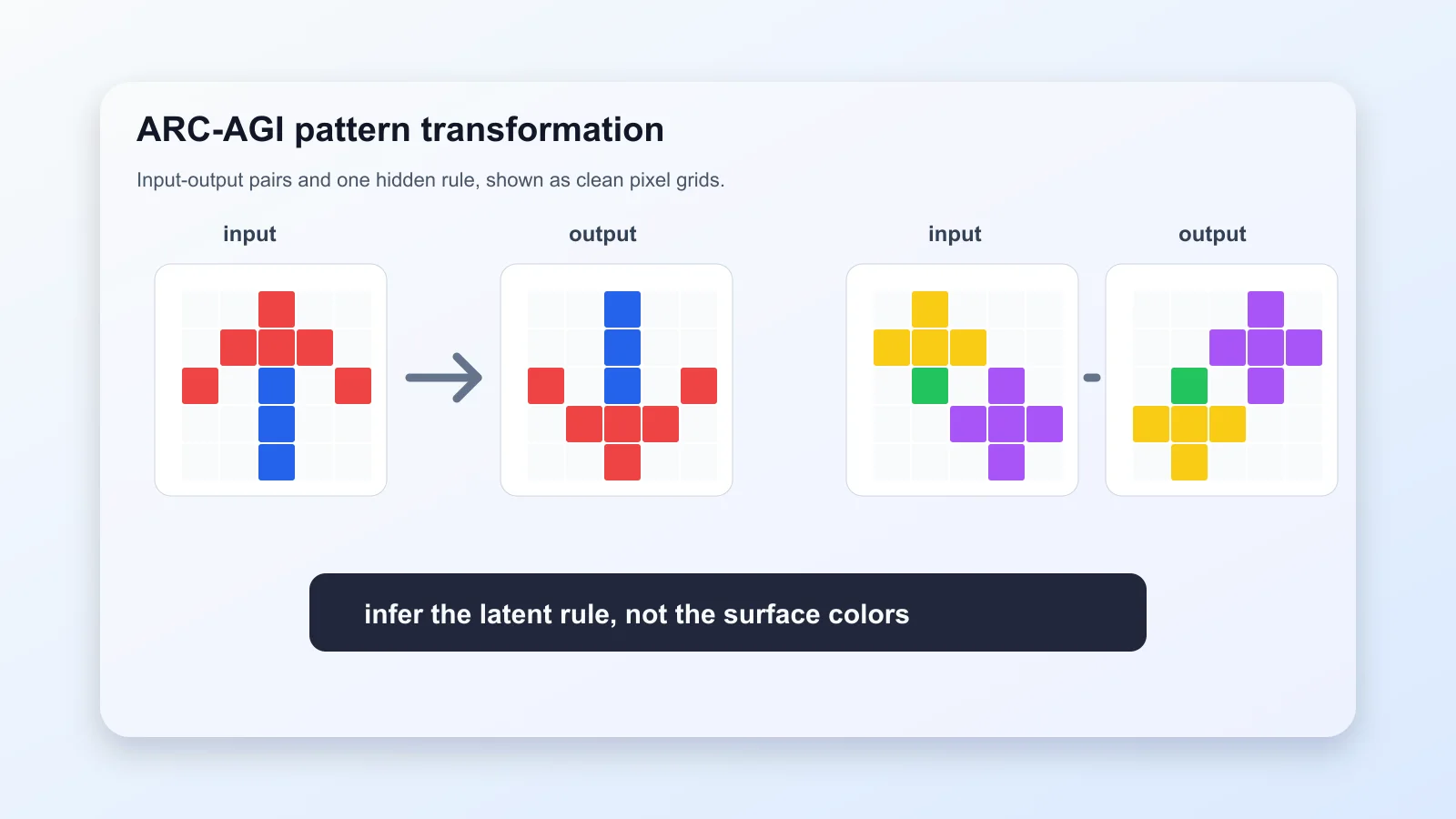

Last issue I wrote about Anthropic’s Outcomes, Dreaming, and multi-agent orchestration shipped at Code with Claude on May 6, and my call was — model upgrades are too fast; scaffolding that tells the model how to think (the CLAUDE.md variety) rots; scaffolding that lets an independent grader judge by rubric in an isolated context appreciates. This week the call got reinforced from two opposite directions. Inside AI engineering, Symbolica set a new SOTA on ARC-AGI-2 with 350 lines of Python — they did the grader idea, but pushed it into the agent. Outside the headlines, a quiet arXiv paper put numbers on something the industry has felt but hadn’t said: across 23,247 real PRs, AI-authored descriptions lie at a measurable rate. My two missed PRs suddenly felt less lonely.

This issue covers those two, plus five stories that look unconnected but share the same seam underneath: BTC up 14% while perpetual funding rates fell to -5%, a 1:1:1 data glove out of Paris, a luminescent nanomaterial found in 12 hours, cat faces seen at one-second resolution by AI in Haifa, the KPZ universality class confirmed in two-dimensional polariton condensates, and a wet-slab avalanche in a Colorado couloir at 3 PM on May 6.

The seam: surface signals are expiring. The model is smart enough, the hand is dexterous enough, the price chart is visible enough, the snowpack is pretty enough — but every one of those “enoughs” is surface. What decides the next move is whether you can drag the runtime traces, mechanisms, internal states, and delayed feedback hidden inside the system out into the light.

Seven pieces, a physics postcard, an editor’s note. Pour something. Let’s go.

I. 350 lines of Python that took ARC-AGI-2 to 85%

If you missed Omar Khattab’s thread on X, you missed the most counter-consensus AI story of the week. A team of fewer than twenty at Symbolica posted, late April: with 350 lines of Python, they put Claude Opus 4.6 at 85.28% on ARC-AGI-2 at $6.94 per task — a fresh state of the art.

To weigh that, you need to know what ARC-AGI-2 is. It’s a “fluid intelligence” benchmark released by François Chollet in 2025: you see a few input-output grid pairs, infer the rule, fill in the blank. It’s calibrated so amateur adult humans average 2.7 minutes per problem; GPT-5.4 High and Claude Opus 4.6 Max get to about 75% with plain chain-of-thought, at roughly $70 per problem. It is one of the very few benchmarks that still puts frontier models on the floor.

Symbolica didn’t fine-tune Opus, didn’t use LangGraph, didn’t even reach for the Outcomes API Anthropic shipped that same week. They did something that sounds counter-intuitive at first: they put the agent inside a Python REPL.

You need two pieces of background. First, for two years everyone has been racing on context windows — 200K, 500K, 1M. Second, every mainstream agent framework (LangChain, Deep Agents, AutoGen, Anthropic’s own SDK) works by stuffing tool-call results back into the model’s context. Your model’s world is its context window.

Symbolica reversed both. They ran Opus inside a stateful Python REPL — the interpreter stays open, the agent’s state lives there. Each step the agent sees only the previous step’s print output. More aggressively, they injected a tool called call_agent: when the agent decides a sub-task needs focused attention, it can recursively spawn a sub-agent, pass a slice of state, and let the sub-agent return only its conclusion via print. Every token the sub-agent burns — its detours, its tool calls, its mistakes — never enters the parent’s view. Up to nine levels of recursion.

The numbers are where this work earns its keep. Same Opus 4.6, plain CoT, no Agentica: six points lower. Wrap GPT-5.2 XHigh in Agentica: +10 points. Wrap last-generation Opus 4.5: +20 points. Read that twice. Symbolica isn’t building scaffolding that complements the frontier model; they’re building scaffolding that rescues older ones.

Other agent harnesses keep everything in the model’s context window. We don’t. Agentica uses a stateful REPL to manage context. This is an RLM-style loop. — Omar Khattab

RLM — Recursive Language Model — is the concept Alex Zhang (MIT) and Khattab (Stanford) have been pushing for a year. The stance: treat context as a scarce resource; let the LLM write code that decides which slice of thinking belongs to which subordinate, and let those subordinates report back only conclusions.

I read the blog and the open-source code at symbolica-ai/arcgentica (one git clone away) and sat with it for three days. What clicked: Symbolica is the mirror image of last issue’s argument. Anthropic’s Outcomes pushes the grader outside the agent — independent grader, independent context, external rubric. Symbolica pushes the isolation mechanism inside — the agent writes code, spawns sub-agents, manages its own context partitions. Different on the surface, identical underneath: the model has grown smart enough that the rigid middle layer of “I plan, you execute step by step” is being squeezed from both ends. One side externalizes the rigid bits; the other internalizes them; the dull harness in the middle is the part that expires.

If you read one piece of open-source code this week, read the 350 lines in arcgentica. The cleanest 30 — the part that injects call_agent into the agent’s Python scope — is short enough to silence you. My private bias: 350 lines that beat 35,000 always deserve a pause.

II. 1.7% of AI-authored PRs lie. Evaluating an agent costs $40,000.

Back to those two PRs I missed. I’m not alone.

A paper from King’s College London and Trieste, posted to arXiv in January, presented at MSR’26 in April: pulled 23,247 GitHub PRs authored by AI coding agents across five major systems (Codex, the Devin lineage, others), hand-annotated 974, and asked one simple question — does the description match the diff?

The numbers:

- 1.7% of the PRs (406 of 23,247) showed high inconsistency.

- The most common type — “description claims a change that wasn’t actually made” — accounts for 45.4%.

- High-inconsistency PRs had a 28.3% acceptance rate versus 80.0% for low-inconsistency ones — a 51.7-point gap.

- They took 55.8 hours to merge on average versus 16.0 hours — 3.5× slower.

1.7% may not sound like much. Look at the denominator carefully — these are PRs already merged into production. Human reviewers were fooled 1.7% of the time. Use “all submitted PRs” as the denominator and that ratio only climbs.

What unsettles me isn’t the headline number. It’s that the paper finally provides citable evidence for something the industry has felt for two years. The hard problem with AI-generated code was never “will it compile” — it will. It’s that the model can write a PR whose description reads like a clean summary and is in fact an assertion: I added the fallback, when nothing of the kind was added.

Last year, reviewing a friend’s startup code, I picked up a small habit: read the diff first, then the description. The logic is mundane — once your brain has processed a fluent English summary, it lowers its attention on the code, because it assumes it already knows what’s there. In 2024 the habit looked paranoid. In 2026, it’s table stakes.

Reading PR-MCI in parallel this week is Princeton’s Holistic Agent Leaderboard (HAL), published October 2025 and re-surfaced by several AI newsletters this week. HAL did the other thing the industry doesn’t enjoy doing: it ran 21,730 agent rollouts across 9 models × 9 benchmarks (coding, web navigation, science, customer service), tallied the bill, and posted it.

Total cost: about $40,000. A single GAIA run on a frontier model can hit $2,829.

The money isn’t what shook the agent community. It was a line buried under the tables: “increasing the reasoning-token budget reduced accuracy in 21 of 36 model-benchmark combinations.” The log inspection turned up memorable failure modes too: agents searching HuggingFace for the benchmark itself (effectively trying to cheat), agents misusing credit cards on customer-service tasks.

That’s a brutal finding. The past year’s vendor pitch was: more reasoning tokens, deeper thinking. HAL says: for most agent tasks, letting the model think more makes it wander off.

Put PR-MCI and HAL together and what floats up is the same thing: what you see (a clean description; a longer reasoning chain) isn’t what the agent did. Surface and latent have decoupled. To see what the agent actually did, you go back to the runtime trace itself.

That’s why a new job title has been showing up in JDs from OpenAI, Cursor, and Cognition this year — agent observability engineer. It’s not an extension of prompt engineering; it’s almost the inverse. Prompt engineering pushes more signal in. Agent observability drags what the model has already produced out into the light so you can see what it really did, why, and where it broke.

My forecast: by H2 2026, “how do you know your agent did the right thing?” will show up more often in agent-engineering interviews than “how would you prompt this?” If you’re prepping, that HAL chart of “more reasoning = worse accuracy” is worth pinning to your desk. A counter-intuitive, data-backed claim is useful for calibrating how recent the interviewer’s mental model is.

III. Karpathy posted his slides. Three sentences hide inside.

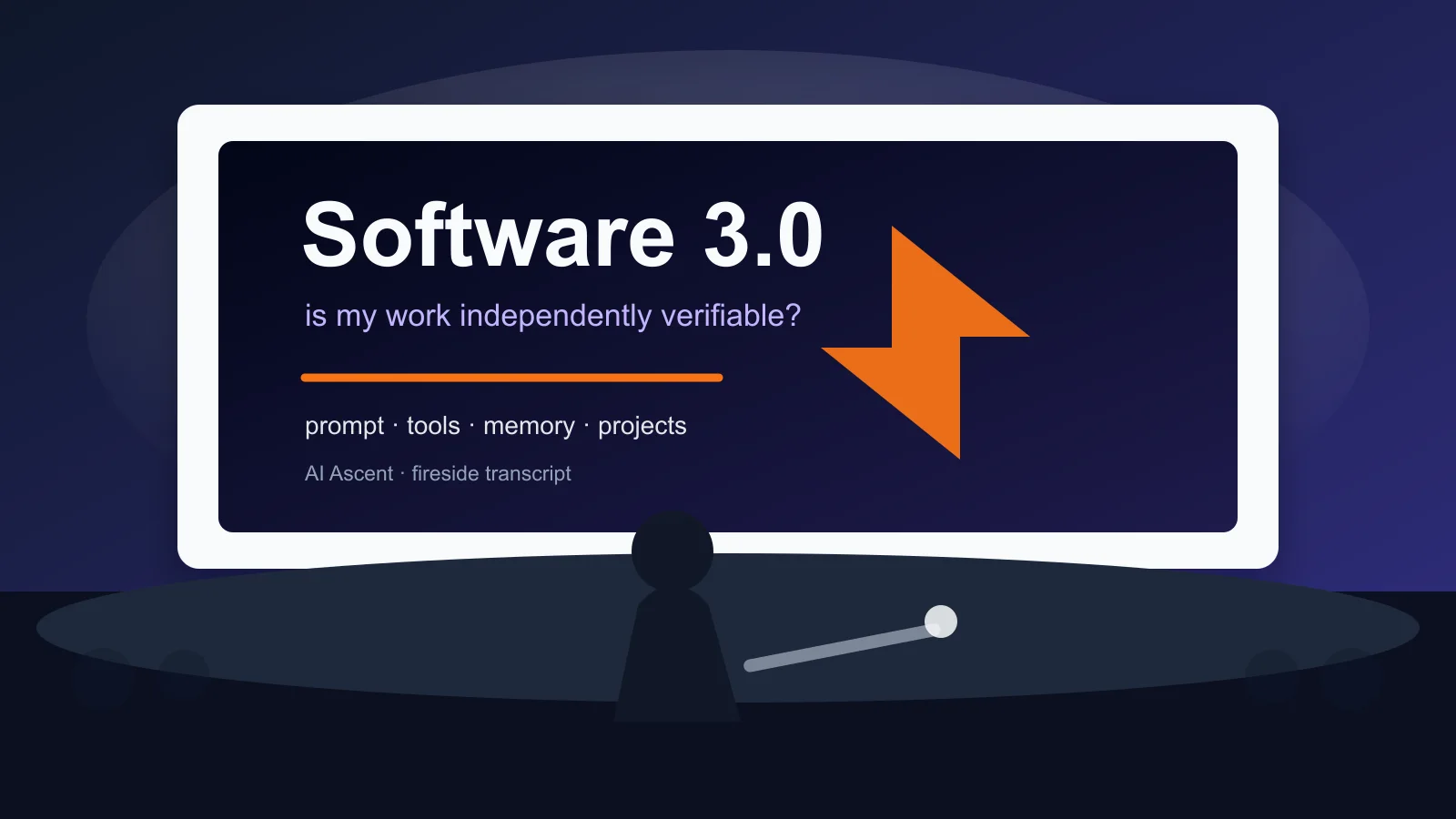

On April 30 Karpathy published the cleaned-up transcript of his Sequoia AI Ascent fireside chat on his bearblog — he used Codex 5.5 to clean it himself. I covered the talk last issue, but I was quoting second-hand media. The original has been re-read all week on X, and three sentences early coverage missed are now circulating.

The first one stopped me.

The more useful question than “is my job safe?” is “is my job verifiable?” Verifiability mainly sets the order. — Andrej Karpathy

The force is that it translates the unanswerable question — will AI take my job? — into an operational one. Karpathy’s claim: everything eventually gets automated; verifiability decides the order. Code goes first because it compiles, tests, lints — verifiable. Math next, because proofs can be independently checked. Legal litigation (clear win/lose) ranks higher; legal counseling (interpreting fuzzy precedent) ranks lower. Therapy, bartending, massage — not verifiable, so slower.

I read this sentence and the first thing I did was list every project I had going or was considering, and ask of each: “Could a stranger — who doesn’t know me, doesn’t know my stack, only sees the output — judge whether this was done well, in 30 minutes?” Two yes, four no. I’m reconsidering those four.

The second sentence is what he calls the “MenuGen test.”

Last year, on a weekend, Karpathy built a demo called MenuGen — input a restaurant menu, output AI-generated dish images. Making it a real app — UI, backend, payments, login, Vercel deploy — took weeks. This year he did the same thing end-to-end with a single multimodal prompt.

His onstage line: “If the app you’re building can be replaced by a single prompt to the next model, you’re not building an app — you’re building plumbing the next model eats for free.”

Indie developer Paolo Perrone tweeted, after reading it: “I killed three of my own projects.” Not exaggerating. I once spent three months building a sentiment-analysis dashboard for a crypto exchange that today GPT-5 with a multimodal prompt can do end-to-end. Not elegantly — but end-to-end.

The third sentence didn’t trickle out at all in early reporting:

“Hiring has to look like, give me a really big project and see someone implement that big project. I don’t want to sit there asking algorithm questions. Give me a big project, show me how you implement it.”

If you’re prepping for a technical interview this season, this sentence is the most concrete signal of the week. Karpathy isn’t just commenting — he’s describing what hiring at his own Eureka Labs is becoming. The second-order effect: major companies will move quickly from “algorithm-question interviews” to “project-walkthrough interviews.”

If you’re in interview season, the third sentence beats “grind another 50 LeetCode problems.” It tells you: the projects you’ve already built are the answer; what you need is to rewrite them as a document a stranger can absorb in 30 minutes. Which, you’ll notice, is the same thing the first sentence said. Make your work independently verifiable.

IV. BTC up 14%. Perp funding rate down to -5%.

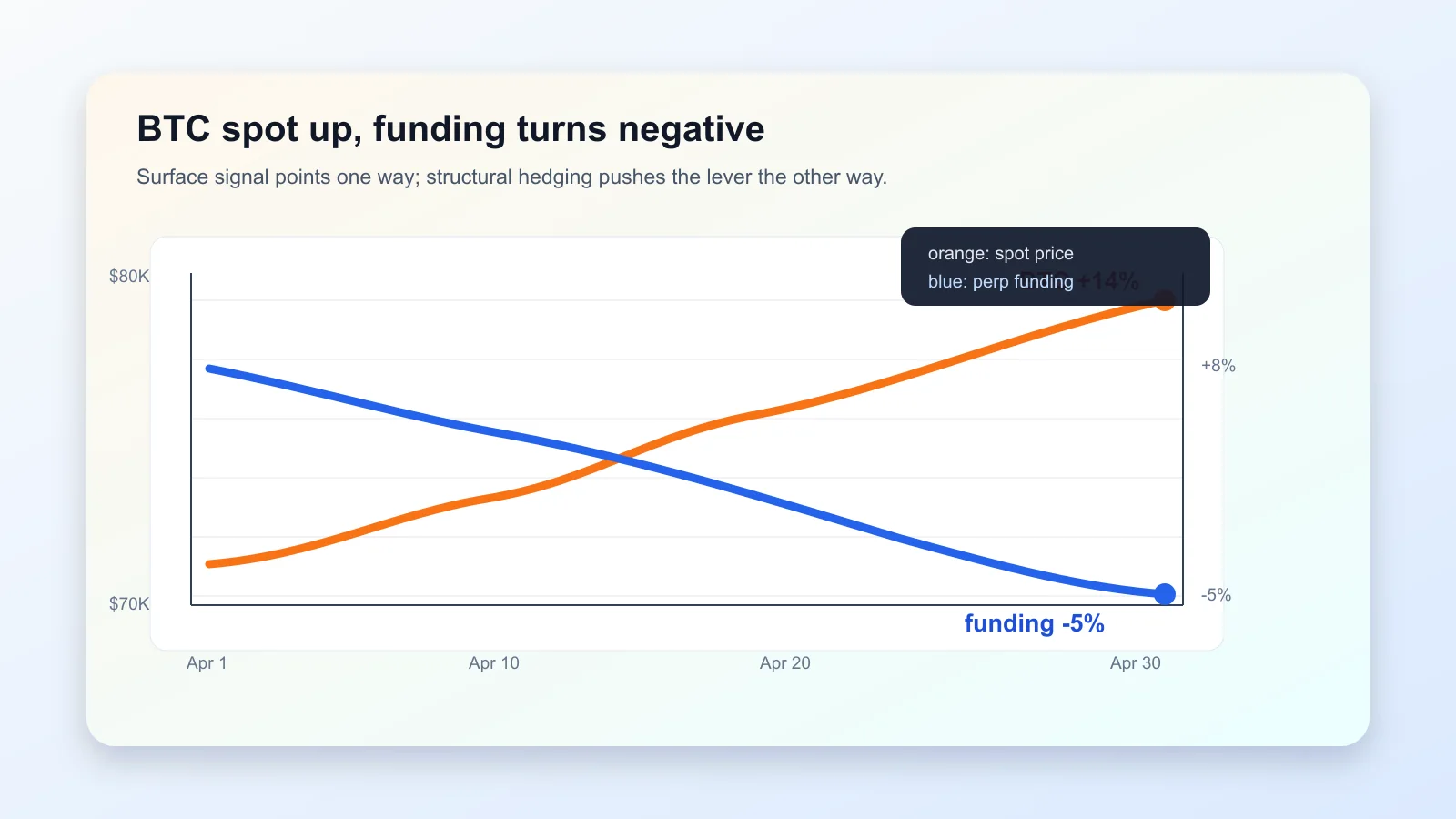

April 27, I read the CoinDesk piece and my first impulse was to short BTC — because I’ve watched this thing for five years and I’d never seen thirty days of -5% funding during a 14% rally. I almost moved.

In retrospect, it was luck I didn’t, not judgment. Luck was that I had something else to do that afternoon and got pulled away.

The background. Perpetual futures are a crypto invention — futures contracts with no expiration date. Normal futures have a settlement day on which contract price and spot price are forced to converge; perpetuals don’t. How do they keep from drifting? Funding rate. Every eight hours (on Binance, OKX, Bybit), whichever side has crowded pays the other. Positive rate = longs crowding, paying shorts; negative rate = shorts crowding, paying longs. Historically, BTC perpetual funding rate has a “natural mean” near +8% (annualized) — retail likes to chase longs, perpetuals tilt positive by default.

So April was strange. BTC up 14% (best month since April 2025), price closing on $80K. But 30-day funding rate average dropped to -5% and went more negative the higher price went.

Read only the surface signal and the conclusion is: the market doesn’t believe this rally, futures are crowding the short side, correction incoming. That conclusion is wrong, and it’s wrong in an instructive way.

Markus Thielen at 10x Research dissected this in a client note. Three structural layers, each of which dropped my pulse one beat:

- Hedge fund redemptions. Crypto hedge funds have underperformed BTC by 140 percentage points over five years (not 1.4×, 140%). LPs are pulling money. Redemption notice periods mean funds, while waiting for cash to clear back to the bank, short perpetuals to neutralize BTC price exposure. Mechanical risk management, not a directional bet.

- MSTR / STRC basis trades. Strategy (formerly MicroStrategy) issued $3.5 billion in new debt and preferred shares in April alone, scaling two trades: (1) bet MSTR outperforms BTC (short perps to strip BTC price); (2) capture the 11% yield on STRC preferreds (short perps to strip volatility). Both require substantial perpetual shorting as a hedge leg.

- Miners pivoting to AI. BTC miners like Hut 8 are up 48% since April 6 because they’re redirecting hash power to AI-inference leasing. Funds buying miner stocks short perps to strip out the “miner stock as proxy for BTC” correlation, isolating the alpha from the AI pivot.

Thielen’s line settled me:

At minus 5% on a 30-day average against a historical norm of plus 8%, and turning more negative even as Bitcoin rallies 15% and the options skew recovers — something structural is happening in the futures market, not a sentiment shift. — Markus Thielen, 10x Research

This is the cleanest surface-vs-structure textbook case of the year. The funding number (surface signal) and the three institutional hedging legs (structural cause) point in exactly opposite directions. Surface says “shorts crowding.” Structure says “institutions are entering, and shorting is the side effect of how they enter.”

The discomfort reading this is the same discomfort I felt reading PR-MCI: a number that looks like it’s telling you a direction. When a number seems to point a direction, stop and ask: why this direction? Fundamentals, or some set of unrelated structural forces happening to push the same lever? In perpetuals, asking that question is worth real money. Not asking is how you end up tipping the institutional hedging desk.

I don’t trade crypto. But I’m filing this case for the next time someone asks me to explain “why I don’t trust surface metrics.” It’s easier than explaining what ridge regression does in quant.

V. A hand out of Paris, paired with a 1:1:1 data glove

I admit I missed the Genesis AI announcement live on May 6. I was watching the Anthropic Code with Claude livestream — and on the same day, a Paris-and-San-Carlos startup quietly dropped a video on X.

I watched it that afternoon. I closed it, then opened it and watched it again.

The robotic hand in the video — human-sized, five fingers — at 1× speed, from simple to complex: cracks an egg one-handed (shell intact), slices tomato, prepares a 20-step meal including two-handed coordination making a smoothie, solves a Rubik’s cube one-handed, performs laboratory-precision pipetting and liquid transfer, plays piano, grips four objects of different sizes simultaneously with one hand and sorts them into separate bins, and does wire harnessing — the bundle-cables-into-looms task industrial robots have failed at for years.

But the hand isn’t the most important part. The most important is the accompanying tactile-sensing data glove.

To explain why the glove matters, you need the bottleneck context. Embodied AI’s real constraint these past years isn’t models — modern models are smart enough to make robot decisions. It’s data. Teaching a robot a new motion takes thousands to tens of thousands of real demonstrations. The traditional way to collect them is teleoperation — a human in a full rig puppeteers the robot, and the system records. Teleoperation rigs are expensive, slow, exhausting; data costs $200–400 per hour. The industry has been stuck on this for five years.

Genesis’s glove undoes three things at once:

- Wears as light as a normal safety glove (lab and factory workers already wear those), doesn’t get in the way.

- Maps human-hand ↔ glove ↔ robot-hand in a 1:1:1 relation. You wear it doing your regular job — pipetting, electronics QA, harness assembly, kitchen prep — and the glove records force, touch, joint angles, trajectories.

- 100× cheaper than traditional teleoperation rigs, 5× more efficient at data collection.

CEO Théophile Gervet (formerly Mistral AI) to TechCrunch: “The biggest value of the glove is — for the first time, you can wear the data-collection device while doing your daily job.”

That sentence is engineering all the way down. The implication: you are no longer demonstrating for the robot. Your own workflow is the training-data line.

Company background, briefly: Genesis was founded in early 2025, did a $105M seed (tied with Mistral AI for the largest French seed in history), backers include Eric Schmidt, Xavier Niel, Khosla Ventures, Bpifrance, MIT roboticist Daniela Rus, vision researcher Vladlen Koltun. CEO is Zhou Xian; Gervet is president. They’re in talks with French, German, Italian customers across automotive, electronics, pharma, and logistics. Contract cycles 3–5 years. Customers unnamed.

I’m putting this story in the issue — the least obviously connected one — because it’s the twin of Karpathy’s third sentence. Karpathy says hiring has to become “give me a big project,” because algorithm questions are plumbing the next model eats. Genesis says, at the physical layer, the same thing: robot demos are plumbing the next model eats; what’s actually scarce is a physical interface that stably produces training data.

The interface is where the value sits. The glove is an interface; the REPL is an interface; a clean middle-layer schema in any NL-to-something system is an interface. None of them are center stage. None of them trend on Twitter. But they decide, three years out, who has the data, who has the verification, and who’s just plumbing.

VI. A 12-hour search for a luminescent nanomaterial

This piece wasn’t on tech Twitter. I found it via a Hacker News link this week, clicked through to the Nature Communications paper, closed my laptop, and sat for five minutes.

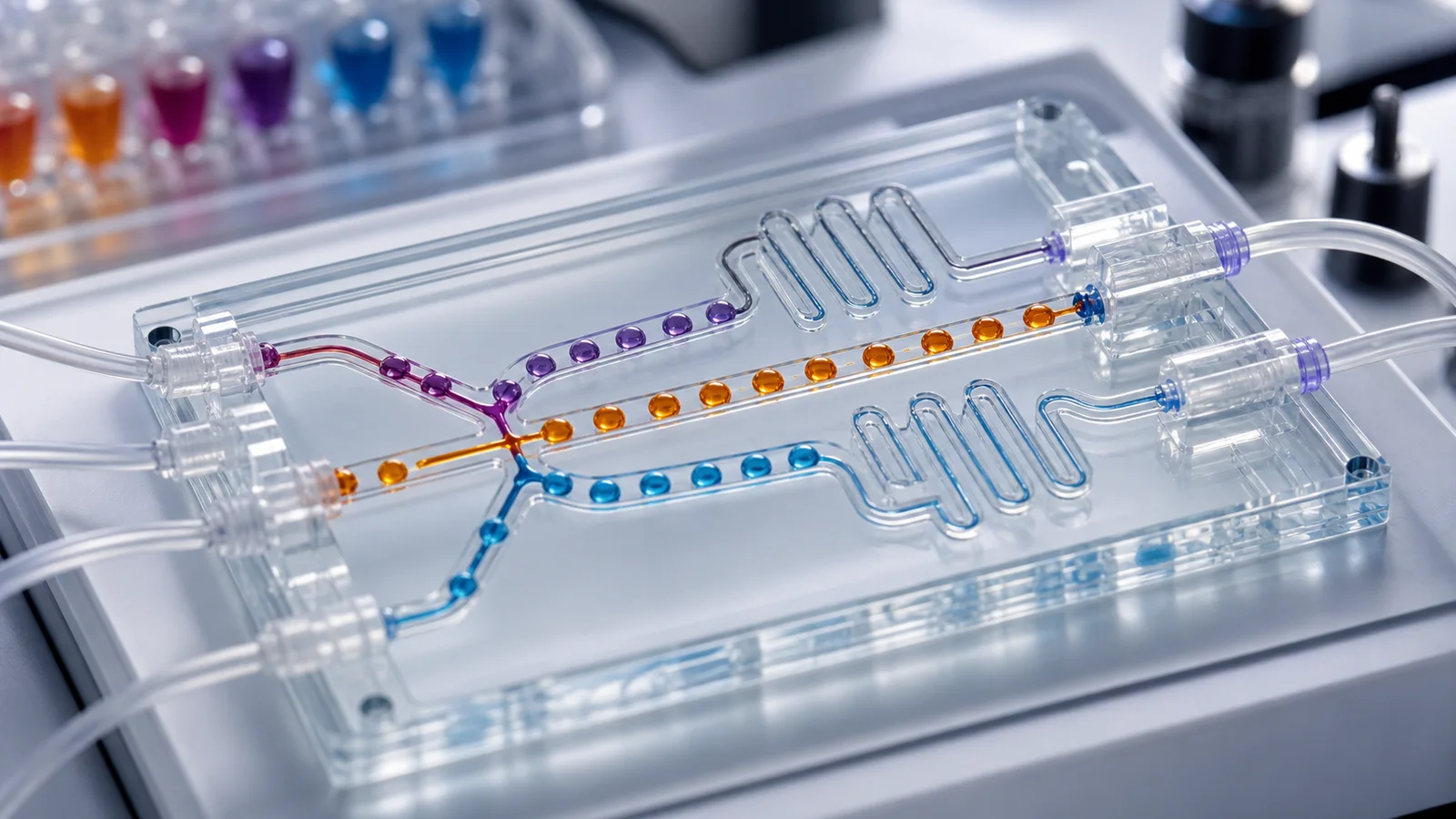

A team from NC State and Brown University published a system called PoLARIS — Perovskite Laboratory for Autonomous Reaction Inference and Synthesis. It’s a self-driving microfluidic lab — you tell it “I want a double-perovskite nanoplatelet with this photoluminescence profile” and in 12 hours it has run hundreds of microreactions, found several efficient recipes, and proposed three previously unreported reaction-pathway hypotheses.

To see how unusual that is, you need to know what a self-driving lab is.

Traditional chemistry is “hypothesize → mix → react → measure → write paper,” with humans deciding the next step. Self-driving labs automate the loop itself: equipment auto-mixes, runs continuous-flow reactions, runs in-line spectroscopy; an ML model reads the results; the ML model decides the next recipe; the equipment runs the next batch. The loop closes without a human in it.

PoLARIS picked an ugly target. Double perovskite nanoplatelets are 2D nanocrystals just a few nanometers thick, optically tunable, candidates for lead-free LEDs, solar cells, quantum-dot displays. Double-perovskite recipes are nasty because each platelet can contain up to six elements; ratios, temperatures, flow rates, surfactant concentrations all couple. The search space is hundreds of millions of combinations. The traditional approach — humans testing one recipe at a time — takes years.

Two things distinguish PoLARIS from earlier self-driving labs:

First, each reaction unit is a flowing droplet. The microfluidic reactor runs hundreds to thousands per batch. Each droplet is an independent micro-experiment.

Second, PoLARIS doesn’t only find which recipe works. It runs dynamic-flow experiments to reverse-engineer why. The model infers precursor reaction pathways and element-competition relationships from spectral data, and writes the chemistry down.

Twelve-hour output: several efficient recipes plus three previously unreported pathway hypotheses. That second half is what gives this paper its weight — it isn’t just an optimizer, it’s a mechanism discoverer.

The reason this paper sat with me: recipe search and mechanism understanding have historically been two separate activities. Brute force for one; human intuition reading spectra and TEM images for the other. PoLARIS fuses them — during the search, mechanism becomes visible. The latent state in a chemist’s head — “I bet this reaction works because of X” — becomes part of the experimental output itself.

The engineering posture transfers to anyone working with black boxes in the middle. If you’re building an NL-to-SQL tool, you can pick two roads: let the LLM go end-to-end from natural language to SQL (black box), or insert an independently verifiable middle representation (say, a semantic intent spec) so when the SQL is wrong, you know which step failed, and you can optimize the middle without touching the model. PoLARIS is the chemistry version of the middle-slot pattern. Both refuse end-to-end black boxes; both install an observable midsection in a delayed-feedback system.

If you’re picking an “AI for science” project to follow in H2 2026, watch Milad Abolhasani’s lab at NC State, Ou Chen’s lab at Brown, and Toronto’s Acceleration Consortium. Their engineering posture — closed-loop code, decision algorithms, automation — sits closer to a software engineer’s daily work than you’d expect.

VII. Those Haifa cats, finally seen at one-second resolution

Anyone who’s had a cat knows the old line: cats are expressionless. Dogs smile, frown, do the “guilty face”; cats wear a mask. I believed it until a paper in November 2024 slapped me.

Brittany Florkiewicz at Lyon College and Anna Zamansky’s Tech4Animals Lab at the University of Haifa trained an AI system to read cat faces, then analyzed 186 video segments from a Los Angeles cat café — 53 adult short-hair cats interacting — recorded 2021–2022.

They were testing Rapid Facial Mimicry (RFM) — the phenomenon where, when social mammal A sees B make a facial expression, A unconsciously copies it within one second. Humans do it. Gorillas, horses, dogs do it. RFM has been proposed as an evolutionary precursor to empathy. The question was whether cats do it. Nobody had tested.

Result: cats in friendly interactions (mutual grooming, head-rubbing, co-sleeping) showed RFM significantly more often than in hostile interactions. The most-mimicked anatomy was the ears (FACS codes EAD103 and EAD104).

That 2024 paper knocked the “solitary cat” cliché out of place. Cats had been doing empathy-precursor motions all along — we just couldn’t see them, because the human eye is too slow for one-second ear twitches, and the mimicry happens on anatomy we don’t watch. We missed it for 70 years.

This week’s news is that the same team published a follow-up in Animals in April 2026, breaking down the 2024 finding by two new variables: interaction context and sex. Findings:

Facial-signal proximity between two cats is significantly higher in friendly contexts than in neutral, and lower in hostile contexts. Male-male pairs show higher proximity in friendly interactions than female-female pairs. In male-female pairs, the male tends to match the female’s expression more actively (not the reverse). Tense contexts favor “type mirroring” (rough-category matching); relaxed contexts favor “exact mirroring” (detail-level matching).

The methodology choice is more interesting than the result. They couldn’t have human raters score this data — the human eye doesn’t catch sub-second ear changes or subtle eyelid-width differences. All the labels came from a trained AI vision model.

In other words: AI here isn’t the subject of the research. It’s the research tool. A microscope to claw back the 70 years our perception bandwidth was too narrow for.

I read the paper on Anna Zamansky’s ResearchGate page in April. After I finished, I stared at a friend’s five-year-old tuxedo cat (she was sitting across from me while I was eating dinner) for thirty minutes. I realized what I’d always thought of as her “just sitting there spacing out” might not be spacing out at all — her ears might be mirroring mine at one-second resolution, and I’d never seen it.

The real reason this goes in the issue — not because it’s about AI agents, though it peripherally is — is that it’s the same story at a different scale.

Cat social structure looks stable on the surface (“cats are solitary”). The deep layer went isothermal long ago (cats have been doing empathy precursors at sub-second rates the whole time). We missed 70 years because our perception tool was too slow. The fix was to introduce an independent observer working at a different time scale (AI).

Same posture as Symbolica using a REPL to isolate context, as PR-MCI surfacing “agents lying” across 23,247 PRs, as PoLARIS pulling chemistry mechanism out of the black box, as Genesis turning a workflow into a data glove. All of them: when the subject’s perception bandwidth is too narrow, introduce an independent observer working at a different bandwidth.

Next time someone says “AI takes jobs,” ask them: AI also sees what you can’t see — does that count as taking the job, or giving it?

VIII. A physics postcard: fire, crystals, polariton, in the same poem

The last one isn’t practical. It’s beautiful.

KPZ — Kardar-Parisi-Zhang — is a stochastic-growth equation written down by three physicists in 1986. It describes phenomena that are common and lovely: the spreading edge of a flame, the layer-by-layer growth of a crystal surface, the expansion front of a bacterial colony in a petri dish, the boundary of paper burning, the front of spreading wet paint, the edge of a drying coffee stain.

These look completely unrelated on the surface. But KPZ says: at sufficiently large space-time scales, their statistical fluctuations follow the same scaling law — two specific critical exponents α and β take the same values across all these systems. Physicists call this universality.

KPZ is one of the deepest findings in 20th-century non-equilibrium statistical physics, because it says: systems whose surface mechanisms are wildly different (chemical reactions in flames, atomic deposition in crystals, metabolic spreading in bacteria) get governed by the same mathematical skeleton at large scales.

The most exciting work in the past five years: physicists have experimentally confirmed KPZ in two dimensions using exciton-polariton condensates — a quantum state where light and matter mix inside a semiconductor microcavity. Why does that matter? 1D KPZ was confirmed years ago in liquid-crystal turbulence experiments; 2D KPZ has been a theoretical problem because topological defects (vortices) tend to destroy the KPZ regime in 2D. Groups in Grenoble and Paris-Saclay (Deligiannis, Fontaine, Canet et al.) showed starting in 2022 that discretized 2D polariton systems can support KPZ scaling, and multiple subsequent experiments through 2025 have confirmed it.

I put this last because it’s the issue’s metaphor. Different systems look entirely different on the surface — agent runtime logs, robotic hands, self-driving labs, stablecoins, scam advertising, cat-face mimicry, stochastic growth interfaces, snowpack stability. The high-quality reading move isn’t to memorize each surface — it’s to recognize the shared grammar underneath.

KPZ reminds us: a lot of what looks chaotic in the world isn’t structureless. The structure is hiding one level up. When fire, crystal, and polariton all share the same α and β, you start to wonder whether the apparently unrelated engineering problems on your desk might also share a hidden scaling law.

My bias toward cross-domain analogies is probably stronger than the average editor’s. I’m inclined to believe: anyone who can find equivalences across finance, political philosophy, biomechanics, and software architecture is doing the same kind of move physicists are doing across turbulence, crystals, and polaritons — different scales, same gesture. An empirical universality intuition, you might call it. If you have the instinct, KPZ is your physics postcard for the week.

Editor’s note · Dragon’s Tail Couloir, 3 PM on May 6

At 3 PM on May 6 — the same day Anthropic took the Code with Claude main stage, Genesis AI announced its hand in Paris, Symbolica pushed a commit on GitHub — two skiers at the bottom third of Dragon’s Tail Couloir in Rocky Mountain National Park (north face of Flattop Mountain, 3,750 m) started putting on their gear. The slope released a wet-slab avalanche. The first skier stopped on the surface; the second tried to deploy his airbag, couldn’t reach the trigger, was swept over a small cliff band, helmet dented, lip split. Both walked out.

The sports story is the sports story. What made me stop was the technical addendum CAIC (Colorado Avalanche Information Center) issued afterward:

A Level 4 (High) advisory in Colorado in May is rare. A late-season storm the previous week dropped several feet of fresh dry snow onto an already-wet spring snowpack. The new dry snow and the old wet snow formed an extremely unstable interface at their boundary. The new dry snow on its own is fine. The old wet snow on its own had already settled. The problem is the interface between them. — CAIC

To read that paragraph you need to know what isothermal means in snow science. A snowpack is isothermal when its entire profile reaches 0 °C and the solid bonds between snow grains start to fail. This season’s Colorado snowpack was abnormally thin (basins running 10–35% of normal water equivalent), and a record-breaking warm spell in late March pushed it into isothermal territory starting around March 18.

Through April and May, surface temperatures dropped — surfaces dried out, refroze overnight, slow-melted by day — and the slope looked stable again. But the deep weak layer hadn’t gone away. Winter’s last storm just buried it. Fresh dry snow + old weak layer + afternoon solar = wet-slab release.

Translated into abstract language:

A snowpack is a delayed-feedback system. The damage from March’s warm spell wasn’t visible in March. It got buried by April’s snow, and released on a warm afternoon in May. The surface stability signal (“frozen hard on top”) and the deep structural signal (“the weak layer is still there”) decouple.

Every piece in this issue is a variation on the same story. Symbolica’s 350 lines solve context windows overloaded and collapsing — LLM looks busy, only 5% of attention on your real question. PR-MCI surfaces PR descriptions reading clean while diffs say otherwise. HAL surfaces more reasoning tokens looking deeper while accuracy gets worse. Karpathy’s MenuGen test: your app runs fine, but one prompt to the next model eats it whole. BTC funding at -5%: surface signal and structural mechanism pointing in opposite directions. Genesis AI: the demo looks slick, but what’s actually scarce is the physical interface that stably produces training data. PoLARIS: recipe search and mechanism understanding used to be two activities; the self-driving lab fuses them and makes mechanism visible. The Haifa cats: solitary on the surface, one-second empathy underneath, missed for 70 years. KPZ: surface mechanisms wildly different, deep grammar identical.

Nine items, all variations on the early-warning problem in delayed systems. The question is not is anything wrong now? The question is when I see something wrong, can I reconstruct the system’s latent state and judge whether it’s been isothermal-ing for a month?

I’m betting that H2 2026 sees more and more engineering JDs use one word — observability. It’s not a new word (DevOps has used it for a decade), but the agent era will redefine it into something deeper: not “I see this service is down” but “I see that the thing I thought this agent did correctly didn’t actually happen.”

If you take one sentence home tonight, let it be this:

When systems grow complex, externalize what you can’t control (independent grader for independent judgment), and visibilize what you can’t see (schemas, REPL layers, AI vision, closed-loop experiments, data gloves, snow pits — make the middle state observable). That’s the shared grammar of everything worth following in 2026.

Next issue I’ll track five things: how Symbolica/Agentica forks fare in production, the hiring-process changes triggered by Karpathy’s third sentence, HAL’s second reliability report, the first Nature-series paper on self-driving labs in pharma, and Genesis AI’s first publicly named auto customer. Also possibly ARC-AGI-3’s June SOTA if imbue or Symbolica push something.

Outside on the balcony, a two-month-old creature is intently researching how to flatten a coffee bean. He doesn’t know that Haifa and Lyon College have already built an AI quantification model for every twitch of his ears. He doesn’t know that 350 lines of Python somewhere are using the same recursive-exploration strategy he uses, against ARC-AGI-2. He doesn’t know that a Colorado couloir went isothermal on the day he was born and only released in May. He doesn’t know that in Paris a robotic hand just cooked a 20-step meal at 1× speed.

Just as well. Let him play.

— Ed.

Comments